NIME, Day 2

Bill Verplank takes the stage

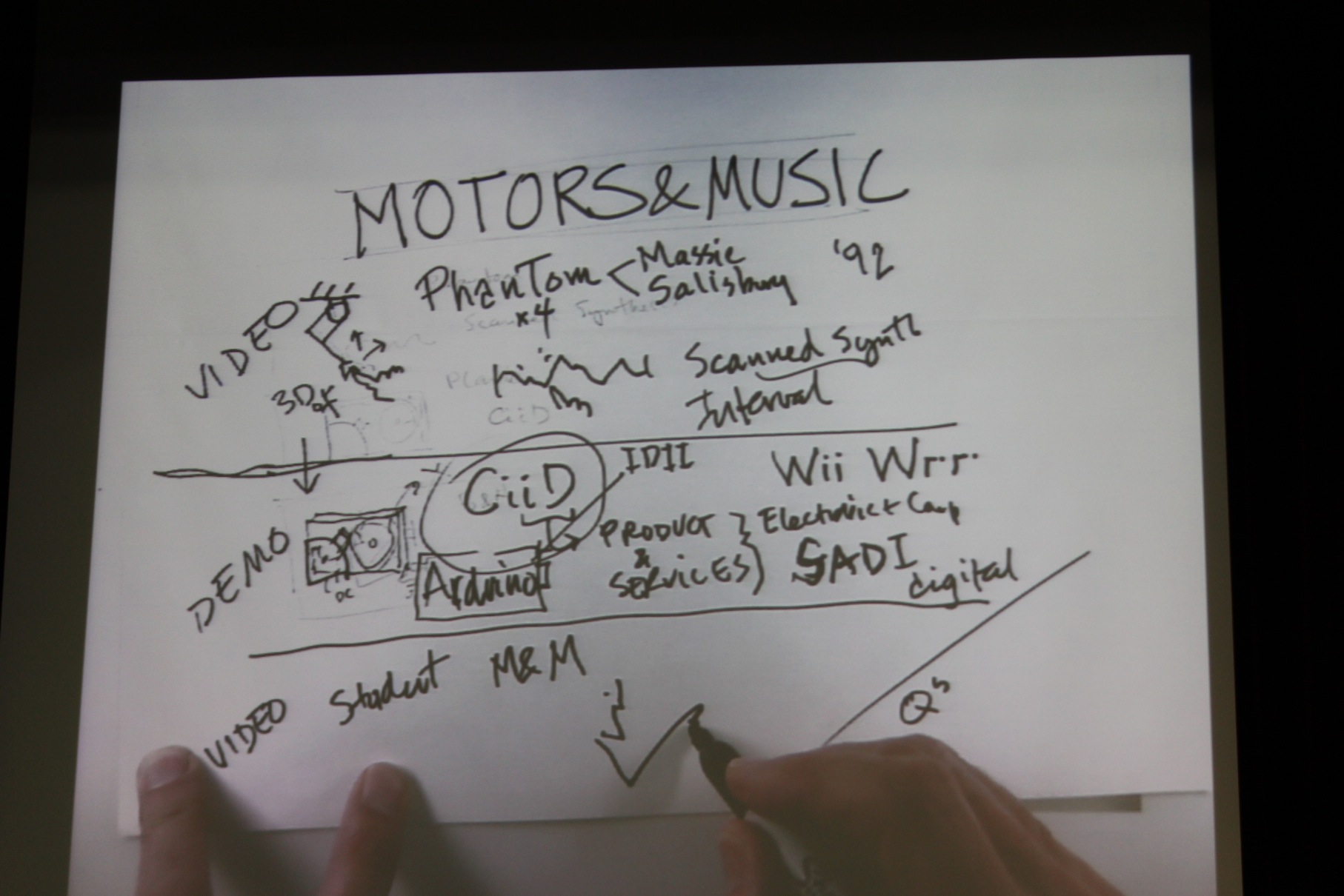

When most people get ready to make a presentation they turn to PowerPoint or Keynote. If they’re of the fixed-gear bicycle persuasion, maybe they reach for something like Prezi. As would become increasingly obvious over the course of his keynote address, Bill Verplank is not most people. The NIME community is known for enthusiastically embracing new technologies. For them, anything older than a Leap Motion is a relic from the Stone Age. Faced with the challenge of holding the attention of such a techno-ravenous group, Bill opted for a revolutionary piece of high-resolution, force-feedback presentation hardware. You may have heard of it: it’s called the pen.

Motors and Music

Bill’s talk, called Motors and Music, covers all the things that you would never expect to hear about during a discussion of computer music. Things like physicality. Things like emotive content and embodiment. Things like the ’80s. He starts by taking us on a tour of early experiments in computer music interaction. Bill narrates over videos of Max Mathews wiggling a string to trigger scanned synthesis and Perry Cook shaking a chain of bend sensors. It’s amazing to see just how advanced the interfaces were then, and how little has changed since. Sure, these days we might use a MacBook Air instead of a tape reel, but at the end of the day it’s almost as if we’ve lost more than we’ve gained. When I sit down to interact with a computer today, I’m lucky if I’m given a real keyboard as opposed to a simulation under glass. Watching Max Mathews and Perry Cook dancing in front of a wall of mainframes, creating music out of a chain, a string, a pen, and a coffee cup, it’s hard not to feel like we’ve lost something.

Bill now moves from archival footage to the present day. He introduces us to the Plank, one of many haptic toys crafted at the Copenhagen Institute of Interaction Design. Think of it as the atomic unit of force-feedback interaction. Built out of a strip of wood and a repurposed hard drive, it’s a piano key that pushes back.

El Planko

He goes on to show us some of the projects his students have worked on using the Plank. You might think that there wouldn’t be much you could accomplish with one sensor and a single degree of freedom, but then you’d be wrong. Clearly, you’ve never played Angry Birds with a force-feedback slingshot, touched a quantum-entangled pendulum, or played Prosthetic Golf.

As Bill brings his presentation to a close, I fight the urge to rush the stage and take the Plank home for myself. I can’t remember the last time I got so excited about a piece of hardware. I didn’t feel this way when the iPad came out. Instead, I remember sinking into a fog of disappointment as I realized computer interfaces were moving away from physical interaction, not towards it. We’ve lost a lot of ground since the days of Max Mathews. When the iPhone first appeared people expressed frustration at having to type by tapping on a piece of glass. It doesn’t feel real, they said. I miss having buttons I can touch, they said. Now, Siri and spellcheck have taught our fingers laziness, complacency.

So what happened to our nuanced, multimodal interaction paradigms? If you ask me, the same thing happened to interface design that happened to digital music: convenience beat quality. Given the choice between watching a poorly encoded YouTube clip and patiently downloading a high-quality, DRM-laden audio file, most people would rather not wait. In the same way, people are more interested in checking their email 500 times a day from their smartphone than they are in having a two-hour jam session with a force-feedback joystick. Researchers like Bill may push advances in interaction design, but it seems to me that makers of consumer electronics will always be more focused on portability and power consumption than on haptic feedback.

Of course, I can’t help but wonder what would happen if some company suddenly decided to throw its whole weight behind a new gestural controller. What would they come up with? I feel the first step would be to fortify the woefully impoverished language that we currently use to talk about gesture. Think about it: when it comes to sound we’re able to address all the nuances of spectrum, waveform, frequency domain, timbre, loudness, pitch, attack, envelope and decay, just to name a few. What language do we have for talking about gestures? Slow versus fast, maybe?

Before he leaves the stage, Bill offers us one last quote:

"Grab a hold of something and feel it push back at you and make music"

At this point one of the audience members, inspired by Bill’s august presence, asks a question about toilets.

New Interfaces for Musical Excretion

by Sam Tarakajian on June 20, 2013