Love Has No Labels

Love Has No Labels is an Ad Council campaign that addresses our inherent biases, diversity, inclusion, and love across classifications. The original video has accumulated over 100 million views across Facebook, YouTube, and mirrors combined.

Conceptually, the project required creating a "live X-ray machine" through which the audience could see only nondescript skeleton figures embracing, kissing, playfully interacting, and so on. Technologically, this implied a live motion capture rig that required little setup, so that actors could easily enter and exit the stage while the tech remained relatively invisible.

Because we used an Xsens IMU (cameraless) mocap setup, accumulated error and drift in the coordinate system of our digital avatars were a very real concern. The problem was compounded by running two suits in constant, close proximity to each other. We therefore researched how to readjust the position of our skeleton avatars in real time.

We discovered that translation parameters (as well as pretty much anything else you'd want to control) are openly exposed in Maya, our CG platform, through the Maya Embedded Language, or MEL.

Furthermore, some of the skeletons' most important gestures, such as opening and closing jaws for laughing, kissing, and talking, and opening and closing hands for waving and holding hands, are not captured by the motion capture suits. So, our animator exposed meta-parameters for articulating these parts with keyframes indexed from 0 to 10.

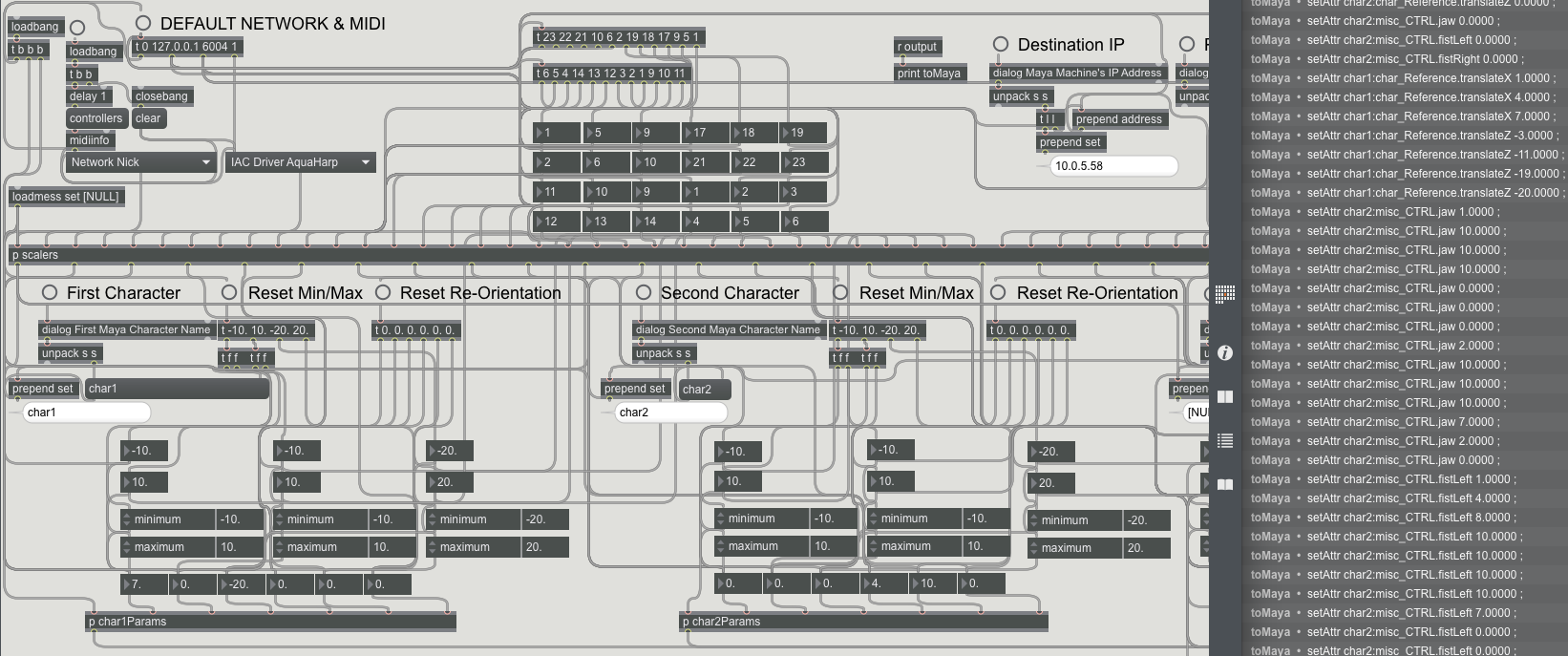

We then created a Max patch that opened a TCP connection to the Maya rendering computer using a stock mxj TCP object. The patch took in CCs (control change messages) from a MIDI controller and wrapped them into coherent MEL command strings, which it sent out over this socket.
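The CC-to-MEL wrapping described above can be illustrated with a minimal Python sketch. It is a stand-in for the idea, not the Max patch itself. The attribute name `skeletonA_ctrl.jawOpen`, the port number, and the assumption that Maya is listening on a TCP port (for example one opened with its `commandPort` command) are all hypothetical and not taken from the project.

```python
import socket


def scale_cc(cc_value, lo, hi):
    """Map a 7-bit MIDI CC value (0-127) linearly onto the range [lo, hi]."""
    cc_value = max(0, min(127, cc_value))  # clamp out-of-range input
    return lo + (hi - lo) * cc_value / 127.0


def mel_set_attr(attribute, value):
    """Format a MEL setAttr command string for one attribute."""
    return 'setAttr "{}" {:.3f};'.format(attribute, value)


def send_mel(command, host="127.0.0.1", port=7001):
    """Send one MEL command over TCP (host and port are assumptions)."""
    with socket.create_connection((host, port), timeout=1.0) as sock:
        sock.sendall((command + "\n").encode("utf-8"))


# Hypothetical attribute name; a fader at full travel maps to keyframe index 10.
command = mel_set_attr("skeletonA_ctrl.jawOpen", scale_cc(127, 0.0, 10.0))
```

Calling `send_mel(command)` would then push the string to the rendering machine, provided something is actually listening at that address.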

With eyes on camera feeds inside and outside of the stage box, we placed avatar translation on rotary knobs and put fists and jaws on the vertical faders of a Livid Ohm RGB for the most performative articulation. This effectively became a secondary puppeteering stage for our realtime mocap rig.
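One way to picture this control layout is as a routing table from CC numbers to Maya attributes. The sketch below is only an illustration: the CC numbers, attribute names, and translation range are invented for the example, and only the 0 to 10 range for jaws and fists comes from the description above.

```python
# Hypothetical CC numbers and Maya attribute names, for illustration only.
# Each entry: CC number -> (attribute, low value, high value).
ROUTES = {
    # Rotary knobs: avatar translation offsets (assumed range, scene units).
    1: ("skeletonA_root.translateX", -50.0, 50.0),
    2: ("skeletonA_root.translateZ", -50.0, 50.0),
    # Vertical faders: meta-parameters keyed from 0 to 10.
    20: ("skeletonA_ctrl.jawOpen", 0.0, 10.0),
    21: ("skeletonA_ctrl.fistClose", 0.0, 10.0),
}


def route_cc(cc_number, cc_value):
    """Return the MEL command for a CC message, or None if the CC is unmapped."""
    route = ROUTES.get(cc_number)
    if route is None:
        return None
    attribute, lo, hi = route
    clamped = max(0, min(127, cc_value))  # keep the value in the 7-bit range
    value = lo + (hi - lo) * clamped / 127.0
    return 'setAttr "{}" {:.3f};'.format(attribute, value)
```

Keeping the mapping in one table means a knob or fader can be reassigned during rehearsal without touching the message-handling logic.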


Year

February 14th, 2015

Location

Santa Monica, CA
